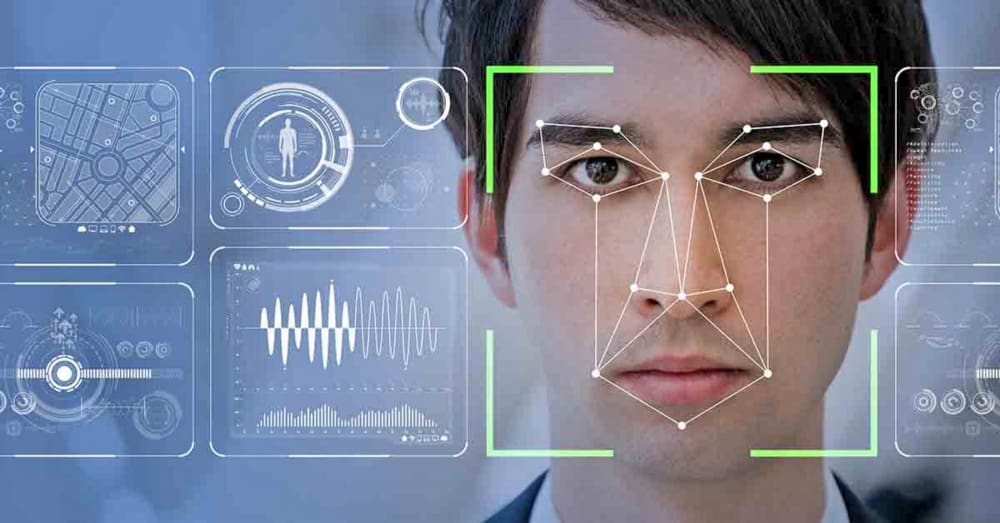

Would you feel comfortable if someone were monitoring you, your family, and your neighbors through facial recognition? What if there were no way to know what information they were collecting, or how it would be used?

If your answers to those questions were “no” and “hell no” respectively, then listen to this:

The Department of Housing and Urban Development (HUD) does not keep track of how facial recognition tech is used in public housing. Like, at all. Nor has the department researched how the technology is used, or instituted policies to control its use.

HUD leaves that responsibility to the individual authorities that oversee housing programs.

Complexes that put up facial recognition cameras have been met with protests, but tenants don’t have widespread federal protections to back them up – yet.

Facial recognition is an exploding and lucrative industry that is fraught with controversy. Here’s the biggest ethical elephant in the room – the current industry offerings still consistently struggle to correctly identify the faces of anyone who is not a white man. Black women are often the hardest for facial recognition software to identify, and while accuracy among transgender individuals has not been widely studied, one can speculate that it would be pretty poor too.

Putting facial recognition in public housing bears a philosophical resemblance to putting police and metal detectors in underfunded public schools, a practice that a growing number of experts say is actively destructive to students. It invites problems with the law where there would otherwise be none, leaving permanent barriers in the lives of ordinary people.

Criminal allegations, even false or trivial ones, can carry dire consequences for individuals and families in public housing. One could face civil asset forfeiture or eviction, or be cut off from other government benefits and relief programs in the future, to list a few. These are already statistically bigger problems in the lives of people who are not white men, so facial recognition is well-positioned to exacerbate existing inequality.

This story is just one of the latest examples of the recklessness that follows facial recognition, as well as those who supposedly regulate it, at every turn. For instance, Clearview AI is a new facial search engine that scrapes publicly available images for faces, including photos others may have taken and posted without your knowledge. Photo by photo, Clearview builds up vast image repositories that can go back for years, and then makes those repositories available to be searched by law enforcement. The company has been accused of ending “privacy as we know it.”

These products, while undoubtedly impressive, have a high potential for misuse. Living in public housing is not a crime, and performing intimate surveillance on innocent people against their will is highly unethical. We all deserve a say in what goes on where we live, regardless of what we’ve got in the bank.

HUD has no excuse, and they should have taken decisive action long ago. But hey, late would be better than never.

This story was first published here in July 2020.