Sci-fi comes to life

The line between science fiction and science gets thinner all the time, and each new technology makes that more evident. At the forefront of turning far-fetched dreams into practical applications is Google.

Google has its hands in everything and is always exploring what comes next. It is not only a search engine but also a dream factory, with the capital to make anything that is possible come true.

Do you remember the movie (and now TV show) Minority Report? I am talking about the scene where Tom Cruise interfaces with his computer using hand gestures instead of a keyboard. That is no longer just the stuff of Hollywood, but an actual technology close to being introduced to the public through Google’s new Project Soli.

How Project Soli works

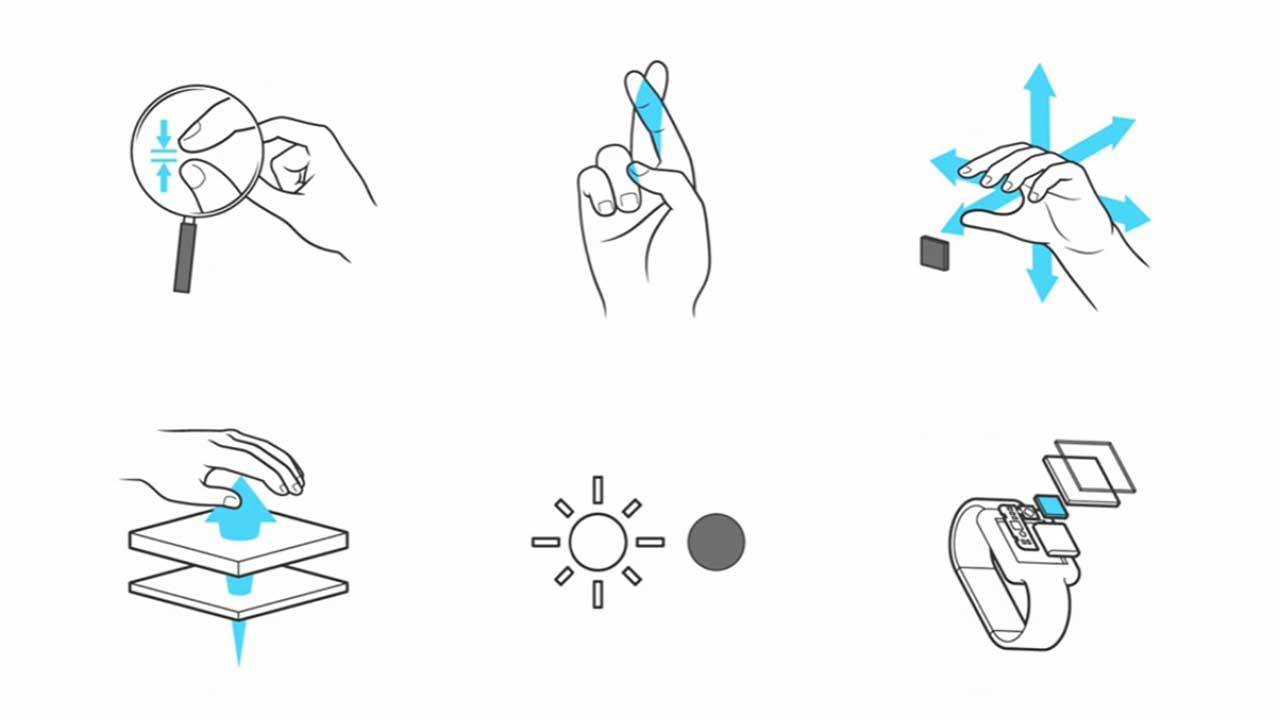

Project Soli is built on a simple, intuitive vocabulary of hand gestures that replaces familiar interface archetypes of the past, like the volume knob or the button. It uses radar to read hand gestures at a rate of 10,000 frames per second.

What is amazing is that the radar has been condensed into a chip that has no moving parts and is roughly the size of an SD memory card. By making certain movements, like mimicking the push of a button or the turn of a volume knob, you can interface with your smartphone, wearable, or laptop.

According to Ivan Poupyrev, Project Soli founder, “What makes this project so promising: it’s extremely reliable. There is nothing to break, no moving parts, no lenses, just a piece of sand on a board.” Expect to be hearing more about Project Soli in the near future.

A quick video explains it in more depth:

#GoogleSoli

John Linneman is a Portland, Oregon native who owns and operates a small digital marketing business. He went to school at Portland State University, where he studied business and writing. He majored in writing and theater at PSU and still stays true to both, but has since transferred his talents to the business and marketing world. Connect with him on Twitter or on his blog.